4 Lab work n°2

You can download Lab Work n°2: RL for stochastic control problems as a Jupyter Notebook here [1].

All the necessary information is already included in the notebook [2]. Below is a brief summary of the lab content and some expected results.

4.1 Summary of the lab

Content:

The lab work is divided into two parts:

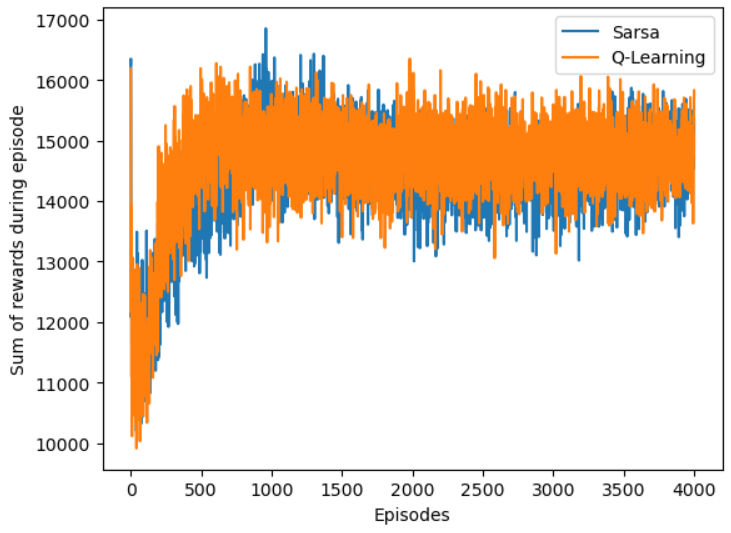

- The first part is devoted to implementing several RL algorithms to solve a market impact problem. We give an expected plot of the evolution of the sum of the rewards for this problem.

- The second part is devoted to recovering the course results on the optimal policy for linear-quadratic control problems in continuous time, i.e. to deriving the associated Riccati equations and the associated optimal policy.
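To make the second part concrete, here is a minimal sketch of how a Riccati equation yields an optimal policy. It does not reproduce the formulation used in the course or the notebook. It assumes a scalar model with dynamics dX = (a X + b u) dt + sigma dW and cost E[ integral over [0, T] of (q X^2 + r u^2) dt + g X_T^2 ]. Under that assumption, the quadratic ansatz V(t, x) = p(t) x^2 + c(t) in the HJB equation gives p'(t) = -2 a p + (b^2 / r) p^2 - q with p(T) = g, and the feedback control u*(t, x) = -(b / r) p(t) x. All names below (`riccati_rhs`, `solve_riccati`, `optimal_control`) and the parameter values are hypothetical.

```python
def riccati_rhs(p, a, b, q, r):
    """Right-hand side of the Riccati ODE written in time-to-go tau = T - t:
    dp/dtau = 2 a p - (b^2 / r) p^2 + q  (assumed scalar LQ model)."""
    return 2.0 * a * p - (b * b / r) * p * p + q


def solve_riccati(a, b, q, r, g, T, n_steps=1000):
    """Integrate the scalar Riccati ODE backward from p(T) = g with classical RK4.

    Returns a list p_grid where p_grid[k] approximates p(t_k), t_k = k * T / n_steps.
    """
    h = T / n_steps
    p = g                      # terminal condition, i.e. tau = 0
    p_by_tau = [p]
    for _ in range(n_steps):
        k1 = riccati_rhs(p, a, b, q, r)
        k2 = riccati_rhs(p + 0.5 * h * k1, a, b, q, r)
        k3 = riccati_rhs(p + 0.5 * h * k2, a, b, q, r)
        k4 = riccati_rhs(p + h * k3, a, b, q, r)
        p += (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
        p_by_tau.append(p)
    return p_by_tau[::-1]      # reverse so that index 0 corresponds to t = 0


def optimal_control(p_t, x, b, r):
    """Linear feedback u*(t, x) = -(b / r) p(t) x for the assumed model."""
    return -(b / r) * p_t * x


if __name__ == "__main__":
    # Hypothetical parameters: no drift and no running state cost, so that
    # p(t) = 1 / (1/g + (b^2 / r) (T - t)) is available in closed form.
    a, b, q, r, g, T = 0.0, 1.0, 0.0, 1.0, 1.0, 1.0
    p_grid = solve_riccati(a, b, q, r, g, T)
    exact_p0 = 1.0 / (1.0 / g + (b * b / r) * T)
    print("numerical p(0):", p_grid[0], "closed form:", exact_p0)
    print("u*(0, x=2):", optimal_control(p_grid[0], 2.0, b, r))
```

The noise level sigma does not appear in the code: in this assumed model it only enters the additive term c(t), so it changes the value function but not the feedback gain. The closed-form case in the `__main__` block is a convenient sanity check before moving on to parameters for which no explicit solution is available.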

4.2 Towards the open-ended mini-project

The list of potential projects on Reinforcement Learning for stochastic control problems can be found in the PDF file available here.

[1] If you end up with a .txt file, download it and rename it as a .ipynb file.

[2] There will be coding and math questions.

[3] No PDF submission is required for the lab session answers. The answers should be written directly in the Jupyter notebook and submitted along with the documents associated with the mini-project.